Solver

From Sokoban Wiki


Data structures

Positions on the board

The board is a two-dimensional area containing the objects of a Sokoban level. Hence, a natural data structure for storing the board is a two-dimensional array. But many solvers use a one-dimensional data structure for this task, numbering the board positions from 0 to n-1, where 'n' is the number of board squares.

Accessing the elements of a one-dimensional vector is easier and faster for a computer program than accessing the elements of a two-dimensional array, simply because the address calculation is less complicated. Using a one-dimensional vector also has an advantage for the programmer: a square number can be stored in just one variable.
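To illustrate the address calculation, here is a minimal Java sketch that numbers the squares row by row (row-major), so that `square = row * width + col` and a move in one of the four directions becomes a fixed offset. The class name `Board` and the character convention (`#` for walls) are assumptions for illustration, not taken from any particular solver.

```java
// Hypothetical helper: converts a level given as text rows into a
// one-dimensional board with row-major square numbering.
final class Board {
    final int width;
    final int height;
    final boolean[] wall;      // wall[square] is true for wall squares
    final int[] directions;    // square offsets for up, down, left, right

    Board(String[] rows) {
        height = rows.length;
        int w = 0;
        for (String r : rows) w = Math.max(w, r.length());
        width = w;
        wall = new boolean[width * height];
        for (int row = 0; row < height; row++) {
            for (int col = 0; col < width; col++) {
                // Squares beyond the end of a short row are treated as walls.
                char c = col < rows[row].length() ? rows[row].charAt(col) : '#';
                wall[square(row, col)] = c == '#';
            }
        }
        // Moving one row up or down changes the square number by the width.
        directions = new int[] {-width, width, -1, 1};
    }

    int square(int row, int col) { return row * width + col; }
    int row(int square) { return square / width; }
    int col(int square) { return square % width; }
}
```

For example, on a board of width 5 the square in row 1, column 2 is square 7, and its right-hand neighbor is square 7 + 1 = 8.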

The board data structure can also include additional data, for instance flags for:

- Is a box frozen?

- Is the position a dead position?

Storing a state

In Sokoban, a state consists of the positions of the boxes and the position of the player. Every other element of the board cannot change its position and therefore needn't be stored per state. Hence, a state can be stored using:

- One integer array for storing all box positions.

- One integer for storing the player position.

For better memory alignment, the player position and the box positions can also be stored together in one integer array. The data structure usually contains additional fields for storing information about the state, for instance the number of pushes needed to reach it or which box has been pushed last.

Example data structure for Java:

```java
public class State {
    int[] boxPositions;
    int playerPosition;
}
```
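If states are stored in hash-based collections, they need value-based equality. The following sketch (a hypothetical `SearchState` class, not part of the original article) keeps the box positions sorted, because boxes are interchangeable: two states with the same set of box squares and the same player square should compare equal. The extra `pushes` field shows where additional per-state data could live. It is deliberately excluded from equality.

```java
import java.util.Arrays;

// Hypothetical variant of the State class with equals/hashCode, so that the
// same board configuration is recognized regardless of box order.
final class SearchState {
    final int[] boxPositions;   // sorted square numbers of all boxes
    final int playerPosition;   // square number of the player
    final int pushes;           // extra data: pushes needed to reach this state

    SearchState(int[] boxPositions, int playerPosition, int pushes) {
        this.boxPositions = boxPositions.clone();
        Arrays.sort(this.boxPositions);   // boxes are interchangeable
        this.playerPosition = playerPosition;
        this.pushes = pushes;
    }

    // Only the configuration counts; extra data such as the push count is ignored.
    @Override
    public boolean equals(Object o) {
        if (this == o) return true;
        if (!(o instanceof SearchState)) return false;
        SearchState s = (SearchState) o;
        return playerPosition == s.playerPosition
                && Arrays.equals(boxPositions, s.boxPositions);
    }

    @Override
    public int hashCode() {
        return 31 * Arrays.hashCode(boxPositions) + playerPosition;
    }
}
```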

Transposition Tables

Sokoban solvers use a graph search as the search algorithm. The search creates a directed graph of states. In nearly every Sokoban puzzle, many states can be reached through different paths in the search graph, and the graph usually contains cycles as well (for example, a box pushed back and forth). In these cases, the search reaches specific states multiple times. The search effort can therefore be reduced considerably by eliminating duplicate states from the search. A common technique is to use a large hash table, called the transposition table, which stores all states explored so far. The transposition table can also be used to store additional data per state, for instance the depth at which the state was reached during an IDA* search.
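A minimal sketch of a transposition table that also stores the depth per state is shown below. It assumes a string key made of the sorted box squares plus the player square. Strings keep the sketch short; a real solver would most likely use a much more compact encoding. The method `visit` reports whether a state is new, or has now been reached at a smaller depth than before, as is useful in an iterative deepening search.

```java
import java.util.Arrays;
import java.util.HashMap;
import java.util.Map;

// Sketch of a transposition table: maps a state key to the smallest depth at
// which the state has been reached. The key format is an assumption.
final class TranspositionTable {
    private final Map<String, Integer> depthByState = new HashMap<>();

    static String key(int[] boxPositions, int playerPosition) {
        int[] boxes = boxPositions.clone();
        Arrays.sort(boxes);   // boxes are interchangeable, so order is irrelevant
        return Arrays.toString(boxes) + "@" + playerPosition;
    }

    // Returns true (and records the depth) if the state is new or has been
    // reached at a smaller depth; returns false for a prunable duplicate.
    boolean visit(int[] boxPositions, int playerPosition, int depth) {
        String k = key(boxPositions, playerPosition);
        Integer known = depthByState.get(k);
        if (known != null && known <= depth) return false;
        depthByState.put(k, depth);
        return true;
    }

    int size() { return depthByState.size(); }
}
```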

Tradeoff between space and time

The transposition table may need to hold several million states. Hence, an efficient data structure has to be chosen for storing the states.

There are different approaches to address the high memory usage problem.

Limit the transposition table size

One solution for the high memory usage of the transposition table is to limit its size. This makes the search algorithm a kind of hybrid between tree search and graph search. The advantage is that larger levels can be searched thanks to the lower memory usage; an obvious disadvantage is that it may lead to duplicated search effort.
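One possible replacement policy for a size-limited table is to evict the least recently used state. The following sketch is an assumption for illustration. It uses Java's `LinkedHashMap` in access order together with its `removeEldestEntry` hook. A state that has been evicted is treated as new when it is reached again, which is exactly the duplicated search effort mentioned above.

```java
import java.util.LinkedHashMap;
import java.util.Map;

// Sketch of a size-limited transposition table with least-recently-used
// eviction. K is whatever key type the solver uses for states.
final class BoundedTranspositionTable<K> {
    private final Map<K, Integer> table;

    BoundedTranspositionTable(final int maxEntries) {
        // accessOrder = true: iteration order is least recently accessed first.
        table = new LinkedHashMap<K, Integer>(16, 0.75f, true) {
            @Override
            protected boolean removeEldestEntry(Map.Entry<K, Integer> eldest) {
                return size() > maxEntries;   // evict once the limit is exceeded
            }
        };
    }

    // Returns true if the state was not in the table (and stores it).
    boolean addIfAbsent(K state, int depth) {
        // get() also marks an existing entry as recently used.
        if (table.get(state) != null) return false;
        table.put(state, depth);
        return true;
    }

    int size() { return table.size(); }
}
```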

Incremental storage of states

Instead of storing all box positions and the (normalized) player position for every state, it's also possible to store the data relative to another state. This divides the states into two types:

- States that store all box positions and the player position.

- States that store a link to another state and the difference to that linked state.

Example: a state consists of these data:

State 1:
- box positions: 10, 13, 21, 33, 45, 47, 48, 49
- player position: 17

Now another state consisting of these data has to be stored:

State 2:
- box positions: 11, 13, 21, 33, 45, 47, 48, 49
- player position: 10

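State 2 differs from state 1 only in the first box (box number 0, which moved from 10 to 11) and in the player position, so it can be stored relative to state 1 this way: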

State 2:
- link to: state 1
- boxNo: 0
- newBoxPosition: 11
- player position: 10
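A sketch of such incremental storage in Java follows. The field names mirror the example (`boxNo`, `newBoxPosition`), but the class itself is hypothetical. One consequence worth noting is that box indices must stay stable between linked states, so the boxes cannot be re-sorted per state. Reconstructing the full box list costs time proportional to the length of the chain of links.

```java
// Hypothetical sketch of incremental state storage. A "full" state stores all
// box positions; an incremental state stores a link to another state, the
// index of the box that moved (boxNo) and that box's new square.
final class StoredState {
    private final int[] allBoxPositions;  // non-null only for full states
    private final StoredState link;       // non-null only for incremental states
    private final int boxNo;              // box that differs from the linked state
    private final int newBoxPosition;     // square of that box in this state
    final int playerPosition;

    private StoredState(int[] allBoxPositions, StoredState link,
                        int boxNo, int newBoxPosition, int playerPosition) {
        this.allBoxPositions = allBoxPositions;
        this.link = link;
        this.boxNo = boxNo;
        this.newBoxPosition = newBoxPosition;
        this.playerPosition = playerPosition;
    }

    static StoredState full(int[] boxPositions, int playerPosition) {
        return new StoredState(boxPositions.clone(), null, -1, -1, playerPosition);
    }

    static StoredState relativeTo(StoredState link, int boxNo,
                                  int newBoxPosition, int playerPosition) {
        return new StoredState(null, link, boxNo, newBoxPosition, playerPosition);
    }

    // Follows the links back to a full state and replays the differences.
    // The memory saving is paid for with this extra CPU time.
    int[] reconstructBoxPositions() {
        if (allBoxPositions != null) return allBoxPositions.clone();
        int[] boxes = link.reconstructBoxPositions();
        boxes[boxNo] = newBoxPosition;
        return boxes;
    }
}
```

With the example data, `StoredState.relativeTo(state1, 0, 11, 10)` reconstructs the box positions 11, 13, 21, 33, 45, 47, 48, 49.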

Normalizing the player position

Solvers not interested in finding solutions optimized for moves can consider two states equivalent if the boxes are at the same positions and the player positions are in the same player access area.

This can be implemented by storing only normalized player positions, e.g. the top-left reachable position instead of the actual position of the player.

This significantly reduces the number of states in the search tree.
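A minimal sketch of this normalization follows, assuming the row-major square numbering `square = row * width + col` and a board surrounded by walls. Under that numbering, the smallest reachable square number is exactly the top-left reachable position. The class and method names are hypothetical.

```java
import java.util.ArrayDeque;

// Sketch: normalized player position = smallest reachable square number,
// found with a flood fill in which walls and boxes block the player.
final class PlayerNormalizer {
    static int normalizedPlayerPosition(boolean[] wall, boolean[] box, int width, int player) {
        int[] directions = {-width, width, -1, 1};
        boolean[] seen = new boolean[wall.length];
        ArrayDeque<Integer> queue = new ArrayDeque<>();
        seen[player] = true;
        queue.add(player);
        int best = player;
        while (!queue.isEmpty()) {
            int square = queue.poll();
            best = Math.min(best, square);   // top-most row first, then left-most
            for (int d : directions) {
                int next = square + d;
                if (next < 0 || next >= wall.length) continue;
                if (seen[next] || wall[next] || box[next]) continue;
                seen[next] = true;
                queue.add(next);
            }
        }
        return best;
    }
}
```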

Calculation of player reachable positions

The reachable player positions must be calculated very often during the solver search. Hence, an efficient algorithm is required.

Algorithm

In order to generate successor states from a specific state during the search, the solver must know which sides of which boxes the player can reach. Instead of calculating a path for the player to every side of every box separately, it's quicker to calculate all player-reachable positions at once. This can be done with a simple breadth-first search (BFS), which marks all reachable positions, for instance in a boolean array with one entry per board position. For better performance, time stamps can be used to mark the reachable positions instead of boolean flags: each calculation uses a new stamp value, and a position is reachable if its stamp equals the current value, so the array never has to be cleared between calculations.
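The time-stamp idea can be sketched as follows. The class is hypothetical, assumes a one-dimensional board surrounded by walls, and preallocates its BFS queue to avoid allocations per call. The only reset of the stamp array happens in the rare case where the counter would overflow.

```java
import java.util.Arrays;

// Sketch of player reachability with time stamps: a square is reachable in
// the latest calculation iff its stamp equals currentStamp.
final class Reachability {
    private final boolean[] wall;
    private final int[] directions;
    private final int[] stamp;   // calculation number in which a square was last reached
    private final int[] queue;   // preallocated BFS queue (each square enqueued at most once)
    private int currentStamp = 0;

    Reachability(boolean[] wall, int width) {
        this.wall = wall;
        this.directions = new int[] {-width, width, -1, 1};
        this.stamp = new int[wall.length];
        this.queue = new int[wall.length];
    }

    // Marks all squares the player can reach; walls and boxes block movement.
    void calculate(boolean[] box, int player) {
        if (currentStamp == Integer.MAX_VALUE) {   // counter overflow: reset once
            Arrays.fill(stamp, 0);
            currentStamp = 0;
        }
        currentStamp++;                            // invalidates all old marks at once
        int head = 0, tail = 0;
        stamp[player] = currentStamp;
        queue[tail++] = player;
        while (head < tail) {
            int square = queue[head++];
            for (int d : directions) {
                int next = square + d;
                if (next < 0 || next >= wall.length) continue;
                if (stamp[next] == currentStamp || wall[next] || box[next]) continue;
                stamp[next] = currentStamp;
                queue[tail++] = next;
            }
        }
    }

    // Only meaningful after calculate() has been called at least once.
    boolean isReachable(int square) { return stamp[square] == currentStamp; }
}
```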

Brute force

The first step is to write a routine that generates all possible pushes for a given level situation. In our level, there are four possible first pushes: up, down, left and right. All of these pushes are made, and the resulting states are stored in a queue.

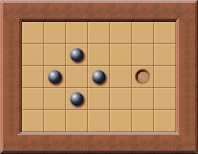

With only one push the box can be pushed to the following positions:

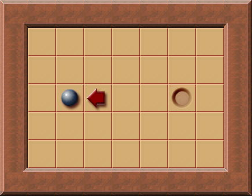

After this is done, we take each of these saved states as a new start state and generate all possible successor states. For example, this is one state that occurs after the first push has been made:

We take this state and generate all of its successor states (which just means we push the box in every possible direction again). Again, we save every generated state in the queue.

Described as an algorithm, this looks like this:

- Add the initial state (the start of the level) to the queue

- Take the first state from the queue

- Generate all successor states of the state taken from the queue

- Add all the states just generated to the queue

- Continue with the second step

Finally we reach the state where the box is located on the goal.
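The loop above can be sketched generically in Java, with a successor function standing in for "generate all possible pushes". This is an illustrative sketch, not a solver's actual code. Because this brute-force version makes no duplicate check, the queue can grow without bound (and never empties for an unsolvable level), so the sketch stops after `maxExpansions` states, a parameter added here as an assumption.

```java
import java.util.ArrayDeque;
import java.util.List;
import java.util.function.Function;
import java.util.function.Predicate;

// Sketch of the brute-force breadth-first loop: no duplicate detection, so a
// cap on expanded states is needed. Returns the number of pushes of the
// first solution found, or -1.
final class BruteForceSearch {
    static <S> int pushesToSolve(S initial, Function<S, List<S>> successors,
                                 Predicate<S> isSolved, int maxExpansions) {
        ArrayDeque<S> states = new ArrayDeque<>();
        ArrayDeque<Integer> depths = new ArrayDeque<>();   // pushes made so far
        states.add(initial);                               // add the initial state
        depths.add(0);
        for (int expanded = 0; !states.isEmpty() && expanded < maxExpansions; expanded++) {
            S state = states.poll();                       // take the first state
            int depth = depths.poll();
            if (isSolved.test(state)) return depth;
            for (S next : successors.apply(state)) {       // generate and enqueue successors
                states.add(next);
                depths.add(depth + 1);
            }
        }
        return -1;
    }
}
```

As a toy usage example, a box in a corridor of squares 1 to 5 that can be pushed one square left or right (ignoring the player) needs 3 pushes to get from square 1 to square 4. Along the way, the queue fills with states such as square 2 reached both from 1 and from 3.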

Pruning duplicate positions

The described algorithm can be improved a lot. One improvement is the following: at some point, the algorithm pushes the box one square to the right and adds the resulting state to the queue. Later, it takes this state from the queue again and generates all successor states, including the state resulting from pushing the box one square back to the left. This results in a loop: pushing a box to the right, then back to the left, again to the right, and so on. We generate duplicate positions, which slows down the search a lot. So we have to avoid duplicate states, and to avoid them we have to detect them. Therefore, we store every state reached during the solving process. After a new state has been generated, we check whether it has already been generated before, and if so, we simply discard it. This considerably reduces the number of states that must be searched before a solution is found and therefore improves the performance of the solver.

New solver process logic:

- Take the first state from the queue

- Generate all successor states of the state taken from the queue

- Delete all successor states that have already been generated before

- Add all remaining states to the queue

- Continue with the first step
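Here is a concrete sketch of this improved process for a level with exactly one box and one goal, like the first example level. Several assumptions apply. The level format (`#` wall, `$` box, `.` goal, `@` player, space for floor) is assumed, the level is assumed to be surrounded by walls, and a state is the pair (box square, player square). All generated states go into a `HashSet`, and a successor is only enqueued if it was not generated before. Since every push costs 1 and the queue is processed in order, the first solution found uses the minimum number of pushes.

```java
import java.util.ArrayDeque;
import java.util.HashSet;
import java.util.Set;

// Sketch of the push-based breadth-first search with duplicate pruning for a
// one-box level. Returns the minimum number of pushes, or -1 if unsolvable.
final class OneBoxSolver {
    static int solve(String[] rows) {
        int height = rows.length, width = 0;
        for (String r : rows) width = Math.max(width, r.length());
        int n = width * height;
        boolean[] wall = new boolean[n];
        int box = -1, goal = -1, player = -1;
        for (int row = 0; row < height; row++) {
            for (int col = 0; col < width; col++) {
                char c = col < rows[row].length() ? rows[row].charAt(col) : '#';
                int sq = row * width + col;
                wall[sq] = c == '#';
                if (c == '$') box = sq;
                if (c == '.') goal = sq;
                if (c == '@') player = sq;
            }
        }
        int[] dirs = {-width, width, -1, 1};
        Set<Long> generated = new HashSet<>();          // every state generated so far
        ArrayDeque<int[]> queue = new ArrayDeque<>();   // entries: {box, player, pushes}
        generated.add((long) box * n + player);
        queue.add(new int[] {box, player, 0});
        while (!queue.isEmpty()) {
            int[] s = queue.poll();
            if (s[0] == goal) return s[2];
            boolean[] reach = reachable(wall, dirs, s[0], s[1]);
            for (int d : dirs) {
                int from = s[0] - d, to = s[0] + d;     // player square, new box square
                if (from < 0 || from >= n || to < 0 || to >= n) continue;
                if (!reach[from] || wall[to]) continue;
                // After the push, the player stands where the box was.
                if (generated.add((long) to * n + s[0])) {
                    queue.add(new int[] {to, s[0], s[2] + 1});
                }
            }
        }
        return -1;
    }

    // Breadth-first search over player moves; walls and the box block the player.
    private static boolean[] reachable(boolean[] wall, int[] dirs, int box, int player) {
        boolean[] seen = new boolean[wall.length];
        ArrayDeque<Integer> queue = new ArrayDeque<>();
        seen[player] = true;
        queue.add(player);
        while (!queue.isEmpty()) {
            int sq = queue.poll();
            for (int d : dirs) {
                int next = sq + d;
                if (next < 0 || next >= wall.length) continue;
                if (seen[next] || wall[next] || next == box) continue;
                seen[next] = true;
                queue.add(next);
            }
        }
        return seen;
    }
}
```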

Deadlocks

Due to the constraints given by the rules of Sokoban, some states in the game are deadlocked. That means it is no longer possible to push every box onto a goal. In general, deadlocks can be arbitrarily complex and potentially involve all the boxes. Hence, detecting all deadlocks is only feasible for very small levels. However, many deadlocks can still be identified. Detecting deadlocks can prune huge parts of the search tree and is therefore an important part of every solver.

See the Deadlocks section for an overview of some common deadlocks that may occur.
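As an illustration, here is one of the simplest deadlock tests. A box that is not on a goal and is blocked by walls on two orthogonal sides (a corner) can never be moved again. The sketch assumes a one-dimensional board with wall and goal flags that is surrounded by walls, so the neighbor indices stay in range. The names are hypothetical.

```java
// Sketch of the corner deadlock test: a box outside a goal with a wall above
// or below AND a wall to the left or right can never be pushed again.
final class CornerDeadlock {
    static boolean isCornerDeadlock(boolean[] wall, boolean[] goal, int width, int box) {
        if (goal[box]) return false;   // a box in a corner on a goal is fine
        boolean vertical = wall[box - width] || wall[box + width];
        boolean horizontal = wall[box - 1] || wall[box + 1];
        return vertical && horizontal;
    }
}
```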

Pushes Lowerbound calculation

The ability to admissibly estimate the number of box pushes needed to solve a specific state is useful for the heuristic function used in a guided search, such as A*.

The more accurate the calculated lower bound is, the better it can guide the search. However, calculating a good lower bound is CPU-intensive.

A good lower bound calculation is especially important for solvers that search for push-optimal solutions. The lower bound can also be used to check whether a level has been solved, since a lower bound of 0 means that all boxes are on goals.

Free goals count

A trivial lower bound can be calculated by counting the number of goals not occupied by a box. This calculation is very fast but the results are quite inaccurate. However, this calculation can be used to identify specific level types.

Example: if the initial state of a level has 20 boxes but only one goal not occupied by a box, then this level belongs to a specific level type where most of the boxes are on goals right from the beginning. For such levels, a lower bound calculation often cannot guide the search as well as in other level types.
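The free goals count is a one-line loop. It is admissible because each free goal needs a box pushed onto it, and a single push can fill at most one goal. A sketch with assumed flag arrays:

```java
// Sketch of the free goals count: goal squares not occupied by a box.
final class FreeGoals {
    static int count(boolean[] goal, boolean[] box) {
        int free = 0;
        for (int sq = 0; sq < goal.length; sq++) {
            if (goal[sq] && !box[sq]) free++;
        }
        return free;
    }
}
```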

Simple Lower Bound

A simple lower bound calculation calculates the sum of the distances of each box to its nearest goal.

The distance from a box to its nearest goal can be calculated with various degrees of sophistication. The simplest method is to calculate the so-called Manhattan distance. A more accurate method is to use the minimum push distance from a box to its nearest goal. The program can precalculate the minimum push distance to the nearest goal for all squares on the board during initialization. That way, it's a simple and efficient table lookup to find the distance to the nearest goal for each box during the search.

Both of these methods have the notable property that they are so-called admissible heuristics, i.e., they never overestimate the number of pushes in the shortest solution. This means the lower bound estimate can be used for solving a level with a push-optimality guarantee.

Calculating a simple lower bound is fast and accurate enough for very small levels. However, in most cases it grossly underestimates the true number of pushes needed. The simple lower bound ignores that all boxes must go to different goals, and it also ignores how the boxes interact with (typically block) one another.
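The precalculation of minimum push distances can be sketched as a breadth-first search that "pulls" a box backwards from all goals at once. A box on square `from` can be pushed in direction `d` onto square `sq` only if the player can stand on `sq - 2d`. The sketch ignores the other boxes and the player's actual reachability, and assumes a one-dimensional board surrounded by walls. Squares from which no goal is reachable keep `Integer.MAX_VALUE`; these are dead squares.

```java
import java.util.ArrayDeque;
import java.util.Arrays;

// Sketch of the simple lower bound with precomputed push distances to the
// nearest goal (multi-source reverse BFS from all goals).
final class SimpleLowerBound {
    static int[] nearestGoalPushDistances(boolean[] wall, boolean[] goal, int width) {
        int n = wall.length;
        int[] dist = new int[n];
        Arrays.fill(dist, Integer.MAX_VALUE);
        ArrayDeque<Integer> queue = new ArrayDeque<>();
        for (int sq = 0; sq < n; sq++) {
            if (goal[sq]) { dist[sq] = 0; queue.add(sq); }
        }
        int[] dirs = {-width, width, -1, 1};
        while (!queue.isEmpty()) {
            int sq = queue.poll();
            for (int d : dirs) {
                // A box on 'from' pushed in direction d lands on 'sq';
                // the player must stand on 'playerSq' behind the box.
                int from = sq - d, playerSq = sq - 2 * d;
                if (from < 0 || from >= n || playerSq < 0 || playerSq >= n) continue;
                if (wall[from] || wall[playerSq] || dist[from] != Integer.MAX_VALUE) continue;
                dist[from] = dist[sq] + 1;
                queue.add(from);
            }
        }
        return dist;
    }

    // Table lookup during the search. Returns Integer.MAX_VALUE if a box is
    // on a dead square, i.e. the state is a deadlock.
    static int lowerBound(int[] dist, int[] boxes) {
        long sum = 0;
        for (int b : boxes) {
            if (dist[b] == Integer.MAX_VALUE) return Integer.MAX_VALUE;
            sum += dist[b];
        }
        return (int) Math.min(sum, Integer.MAX_VALUE - 1);
    }
}
```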

Minimum Matching Lower Bound

The Minimum Matching Lower Bound algorithm calculates a minimum-cost perfect matching on a bipartite graph. The “cost” is represented by the push distance of a specific box to a specific goal. Each box is assigned to a goal so that the total sum of distances is minimized.

The matching can be calculated using, for instance, the Hungarian method or the auction algorithm. The complexity of this calculation is about O(N³), where N is the number of boxes, so it is quite an expensive calculation.
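A compact sketch of the Hungarian method follows, in its O(N³) shortest-augmenting-path formulation with potentials. It assumes as many boxes as goals and a cost matrix `cost[box][goal]` of push distances. Impossible box/goal pairs get a large `UNREACHABLE` cost, which is an encoding chosen for this sketch. If the cheapest perfect matching still needs such a pair, no assignment of boxes to goals exists, which corresponds to a bipartite deadlock.

```java
import java.util.Arrays;

// Sketch of the minimum matching lower bound via the Hungarian method.
// cost[b][g] = push distance of box b to goal g (N x N).
final class MinimumMatching {
    static final long UNREACHABLE = 1_000_000_000L;

    // Minimum total push distance, or -1 for a bipartite deadlock.
    static long lowerBound(long[][] cost) {
        int n = cost.length;
        long[] u = new long[n + 1], v = new long[n + 1];  // dual potentials
        int[] match = new int[n + 1];  // match[g] = box assigned to goal g (1-based)
        int[] way = new int[n + 1];    // previous goal on the augmenting path
        for (int box = 1; box <= n; box++) {
            match[0] = box;
            int g0 = 0;
            long[] minv = new long[n + 1];
            Arrays.fill(minv, Long.MAX_VALUE);
            boolean[] used = new boolean[n + 1];
            do {
                used[g0] = true;
                int b0 = match[g0], g1 = 0;
                long delta = Long.MAX_VALUE;
                for (int g = 1; g <= n; g++) {
                    if (used[g]) continue;
                    long cur = cost[b0 - 1][g - 1] - u[b0] - v[g];  // reduced cost
                    if (cur < minv[g]) { minv[g] = cur; way[g] = g0; }
                    if (minv[g] < delta) { delta = minv[g]; g1 = g; }
                }
                for (int g = 0; g <= n; g++) {  // update potentials
                    if (used[g]) { u[match[g]] += delta; v[g] -= delta; }
                    else minv[g] -= delta;
                }
                g0 = g1;
            } while (match[g0] != 0);           // until a free goal is reached
            do {                                // augment along the path
                int g1 = way[g0];
                match[g0] = match[g1];
                g0 = g1;
            } while (g0 != 0);
        }
        long total = 0;
        for (int g = 1; g <= n; g++) total += cost[match[g] - 1][g - 1];
        return total >= UNREACHABLE ? -1 : total;
    }
}
```

For the cost matrix `{{4, 1, 3}, {2, 0, 5}, {3, 2, 2}}`, the cheapest assignment is box 0 to goal 1, box 1 to goal 0 and box 2 to goal 2, with a total of 5.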

The benefits of this expensive calculation are:

- It produces much more accurate results than the Simple Lower Bound calculation.

- It provides the parity of any solution: if the value returned by the algorithm is even, then the number of pushes in any solution is also even (and if it is odd, the number of pushes is odd).

This makes it possible to skip every other iteration in an iterative deepening search.

- It can detect bipartite deadlocks.
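The parity property can be justified with a short argument, assuming as many boxes as goals and no deadlock. The notation here is introduced only for this sketch and is not part of the article. (r, c) denotes the row and column of a square. T is the sum of r + c over all box squares. P is the number of pushes of any solution. d(b, g) is the push distance of box b to goal g. σ is the matching and M is the matching value.

```latex
\begin{aligned}
T &= \sum_{b} (r_b + c_b) && \text{each push changes } T \text{ by } \pm 1,\\
P &\equiv T_{\text{end}} - T_{\text{start}} \equiv \sum_{g} (r_g + c_g) + \sum_{b} (r_b + c_b) \pmod 2,\\
d(b, g) &\equiv (r_b + c_b) + (r_g + c_g) \pmod 2 && \text{(parity of any path length)},\\
M &= \sum_{b} d\bigl(b, \sigma(b)\bigr) \equiv \sum_{b} (r_b + c_b) + \sum_{g} (r_g + c_g) \equiv P \pmod 2.
\end{aligned}
```

Since M and P always have the same parity, an iterative deepening search whose threshold starts at M can increase the threshold in steps of 2.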

Calculating the box distances

For calculating the pushes lower bound, the algorithm needs the distances from all boxes to all goals. These distances can be calculated in different ways. Since the wall positions and goal positions never change, the distances for a specific box depend only on:

- the player position

- the positions of the other boxes

If the player position and the positions of the other boxes are ignored, the distances used for the Minimum Matching Lower Bound calculation can be precomputed before the solver starts.
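A sketch of such a precomputation follows. It runs one reverse ("pull") breadth-first search per goal and ignores the player position and the other boxes. `distance[g][square]` is the minimum number of pushes that moves a box from `square` to goal number `g`, or -1 if this is impossible. The one-dimensional board surrounded by walls and the names are assumptions.

```java
import java.util.ArrayDeque;
import java.util.Arrays;

// Sketch: precomputed push distances from every square to every goal.
final class GoalDistances {
    static int[][] compute(boolean[] wall, int[] goals, int width) {
        int n = wall.length;
        int[] dirs = {-width, width, -1, 1};
        int[][] distance = new int[goals.length][n];
        for (int g = 0; g < goals.length; g++) {
            int[] dist = distance[g];
            Arrays.fill(dist, -1);
            ArrayDeque<Integer> queue = new ArrayDeque<>();
            dist[goals[g]] = 0;
            queue.add(goals[g]);
            while (!queue.isEmpty()) {
                int sq = queue.poll();
                for (int d : dirs) {
                    // Reverse push: box on 'from', player on 'playerSq', box ends on 'sq'.
                    int from = sq - d, playerSq = sq - 2 * d;
                    if (from < 0 || from >= n || playerSq < 0 || playerSq >= n) continue;
                    if (wall[from] || wall[playerSq] || dist[from] != -1) continue;
                    dist[from] = dist[sq] + 1;
                    queue.add(from);
                }
            }
        }
        return distance;
    }
}
```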

No influence pushes

Tunnels

With the algorithms described above, the solver solves our little level very quickly. Usually, Sokoban levels are a lot more complicated. Therefore, we have to look for further ideas to reduce the number of states that have to be generated during the solving process.

New example level:

Here we have a (still very easy) level containing two boxes. The solver takes the given state as the initial state and generates all possible successor states. This means pushing box 1 in every possible direction AND pushing box 2 in every possible direction. The solver doesn't know which push is best for solving the level, hence it has to generate all possible successor states. This soon leads to an enormous number of generated states (at least in larger Sokoban levels). It would be a great advantage if we knew that only the pushes of one box are relevant in a specific state.

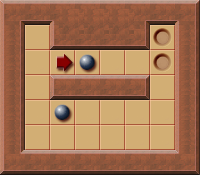

Let's assume the player has just pushed the box next to him one square to the right. What to do next?

The solver will consider every possible next push: all possible pushes of the upper box and all possible pushes of the lower box. But the upper box is in a tunnel, so the push just performed was a "no influence push". The reason: the set of squares the lower box can ever be pushed to has stayed the same. The push of the upper box neither adds squares the lower box can reach nor removes any. The box that was just pushed definitely has to be pushed again sooner or later, so there is no reason not to push it further to the right immediately!

In general such a "no influence push" is always created when the situation AFTER the push looks like this (or any flipped / mirrored version of it):

Every time a push of a box results in such a situation, the box can immediately be pushed one square further! In some levels, this rule considerably reduces the number of states to be generated.

PI-Corrals

This section describes an admissible domain-dependent move pruning technique for the Sokoban game called PI-Corral pruning.

PI-Corral pruning has highly attractive properties for a Sokoban solver program:

- It prunes a significant number of game positions from the search

- It steers the search towards positions with a certain class of deadlocks, thereby helping to detect these deadlocks early on, increasing the pruning even further

- It preserves push-optimality guarantees provided by the solver

PI-Corral pruning is similar to pruning due to tunnels: It identifies pushes that don’t influence certain parts of the board and then ignores all boxes that aren’t influenced by the performed push.

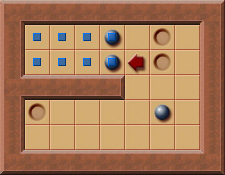

A simple example of a PI-Corral:

In this level, the player has just pushed the box to the left.

Normally, the solver would now generate all possible pushes for all boxes, including the box in the lower right corner. The push creates an area the player can't reach, which is marked in the image.

The boxes that are part of this area can only be pushed inside this corral (that is, onto the marked squares) with the next push. Hence, these pushes don't have any impact on the rest of the level: the reachable player positions aren't reduced, and neither are the reachable positions of the box in the lower right part of the board.

At least one of the boxes at the border of the marked area must be pushed into that area sooner or later. This is always necessary when a box at the border or inside the area isn't located on a goal square, or when a goal square inside the area isn't filled with a box. In the current position, the player can already make every push that can ever be made into the marked area. Therefore, the solver just needs to generate the pushes for the boxes at the border of the marked area. The legal pushes for all other boxes on the board can be ignored.

This can reduce the pushes to be generated a lot - particularly when there are a lot of boxes in the level that aren't involved in the PI-Corral.
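The following is a deliberately partial sketch. It covers only one building block: finding the boxes that border the area the player cannot reach, i.e. the boxes whose pushes would still be generated once the area is known to be a PI-corral. The full PI-corral test is NOT implemented here. That test would check that every possible push of the border boxes goes into the corral and that the corral is not already solved. The `reachable` array is assumed to come from a player reachability calculation. The names are hypothetical.

```java
import java.util.ArrayList;
import java.util.List;

// Sketch: boxes adjacent to at least one floor square the player can't reach.
final class CorralBorder {
    static List<Integer> borderBoxes(boolean[] wall, boolean[] box,
                                     boolean[] reachable, int width) {
        int[] dirs = {-width, width, -1, 1};
        List<Integer> result = new ArrayList<>();
        for (int sq = 0; sq < wall.length; sq++) {
            if (!box[sq]) continue;
            boolean touchesCorral = false;
            for (int d : dirs) {
                int next = sq + d;
                if (next < 0 || next >= wall.length) continue;
                // An unreachable square that is neither wall nor box lies in a corral.
                if (!wall[next] && !box[next] && !reachable[next]) touchesCorral = true;
            }
            if (touchesCorral) result.add(sq);
        }
        return result;
    }
}
```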

Parking

Many levels cannot be solved by just pushing one box at a time to the goal position.

Most levels are constructed in such a way that some of the boxes have to be specifically rearranged to make room for pushing other boxes.

In some cases boxes have to be actually pushed over a goal position to a “parking” position, from where they can then later be pushed to their final goal position.

Example:

File:Parking example1.png

YASS Solver

Sokoban solver "scribbles" by Brian Damgaard about the YASS solver

Limited search

Ideas by David Holland on computer solving by limited search are linked below. They aren't fully wikified yet, as the author has RSI. They feature new concepts such as free space, shuffling, relocation and generalized deadlock detection, and include sketches of algorithms. Other new concepts, such as goal traps (with algorithms), may be implemented in existing solvers. The author hopes other programmers will discuss and implement what they find relevant. Limited search, as opposed to exhaustive search, uses macros, heuristics and domain-specific knowledge to search deeper in the tree of positions effectively, by not looking at every position. A new category of "Planner" plug-in is suggested for limited search, to be tried when the Solver plug-in fails, indicating too large a tree.

David Holland's computer Sokoban solving ideas